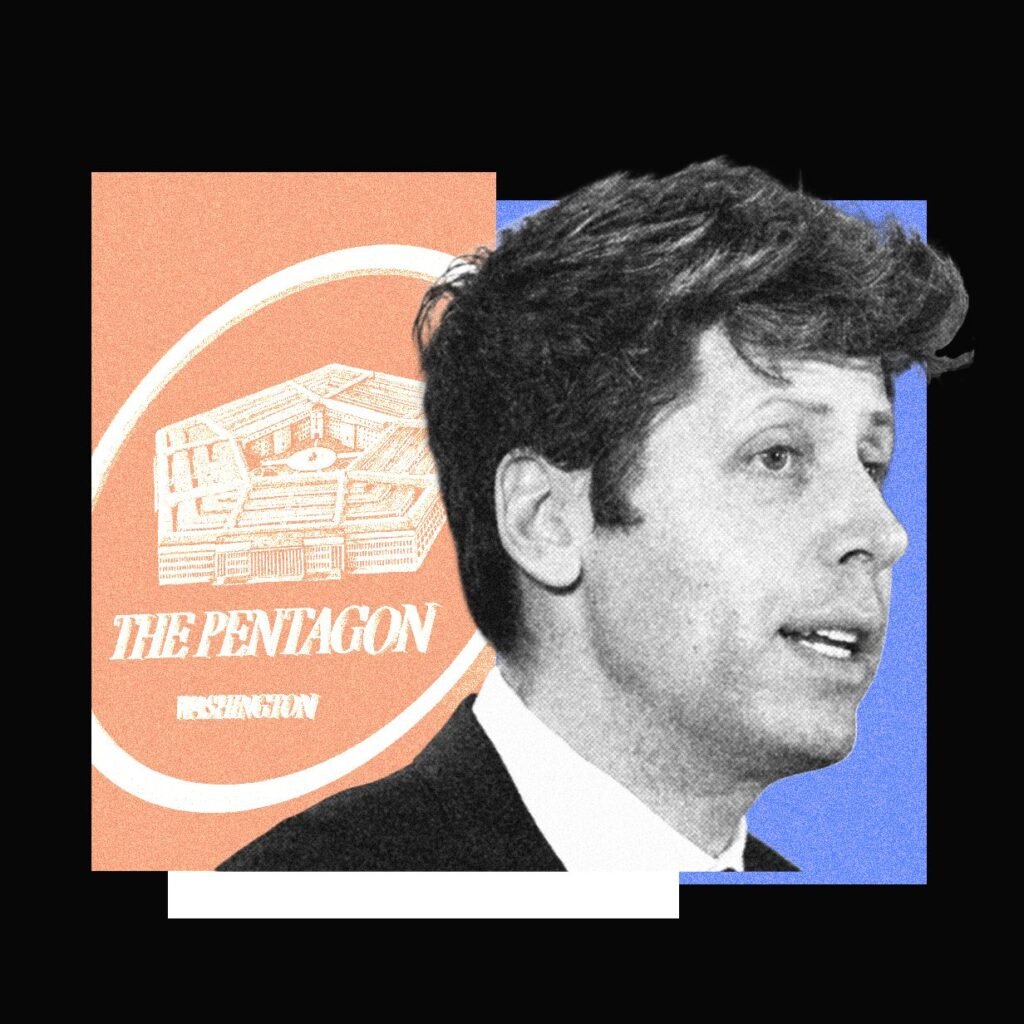

The Pentagon found a way to test OpenAI’s ChatGPT technology for military purposes while OpenAI still banned military use entirely. Sources say the Defense Department ran experiments using Microsoft’s version of the same AI models that power ChatGPT.

This creates an awkward situation. OpenAI prohibited military use, but its biggest partner, Microsoft, was apparently helping the Pentagon test the same technology through a different door.

The Microsoft Loophole

OpenAI has a close partnership with Microsoft, which invested billions in the ChatGPT maker. Microsoft offers OpenAI’s AI models through its own cloud services, giving the tech giant significant influence over how the technology gets used.

The Defense Department reportedly used this Microsoft connection to experiment with the AI models before OpenAI officially changed its rules. OpenAI recently lifted its military ban and now allows some defense applications, as long as they’re not for weapons development.

This situation highlights how complicated AI governance becomes when multiple companies control different pieces of the same technology. OpenAI might set the rules, but Microsoft controls much of the distribution.

What Happens Next

OpenAI now accepts military customers directly, so future Pentagon projects won’t need to go through Microsoft. But the episode raises questions about whether AI companies can really control how their technology is used once it’s integrated into other platforms.

Expect more clarity on AI military policies as other companies face similar situations.