Anthropic is joining forces with Apple, Google, and 45+ other companies to prevent AI from being used for cyberattacks. The partnership, called Project Glasswing, will test how well Anthropic's new AI model can defend against digital threats.

This matters because AI is getting scarily good at hacking. The same technology that can write emails and answer questions can also find security holes in websites, create fake phishing emails, and break into computer systems. As AI gets smarter, the potential for digital chaos grows.

Fighting Fire With Fire

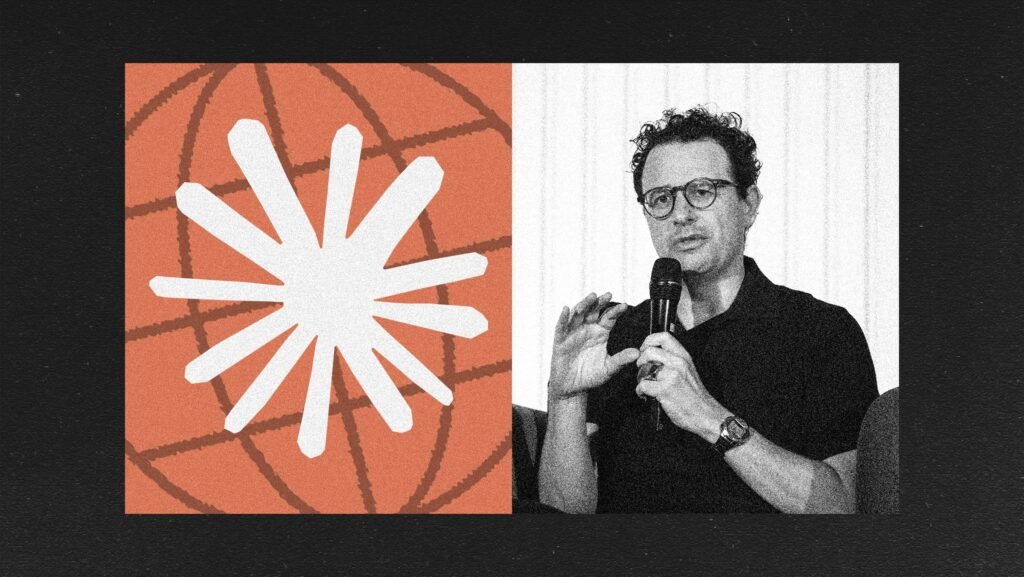

Anthropic’s approach is clever: use AI to catch AI. Its new Claude Mythos Preview model will work like a digital security guard, scanning for threats that other AI systems might create. Think of it as teaching a robot to spot when another robot is up to no good.

The partnership brings together tech giants who usually compete against each other. Apple, Google, and dozens of other companies are sharing resources because the threat is bigger than any single company can handle alone.

Project Glasswing will test real-world scenarios – like whether AI can detect when malicious software is trying to break into a network, or if it can spot deepfake videos designed to trick people.

What Happens Next

Expect to see AI-powered security tools rolling out across major platforms in the coming months. Your email might get better at catching sophisticated scams, and websites could become harder for bad actors to exploit. The goal is to stay one step ahead of cybercriminals who are already using AI for attacks.